Progress used to be glamorous. For the first two-thirds of the twentieth century, the terms modern, future, and world of tomorrow shimmered with promise.

Glamour is more than a synonym for fashion or celebrity, although these things can certainly be glamorous. So can a holiday resort, a city, or a career. The military can be glamorous, as can technology, science, or the religious life. It all depends on the audience. Glamour is a form of communication that, like humor, we recognize by its characteristic effect. Something is glamorous when it inspires a sense of projection and longing: if only . . .

Whatever its incarnation, glamour offers a promise of escape and transformation. It focuses deep, often unarticulated longings on an image or idea that makes them feel attainable. Both the longings – for wealth, happiness, security, comfort, recognition, adventure, love, tranquility, freedom, or respect – and the objects that represent them vary from person to person, culture to culture, era to era. In the twentieth century, ‘the future’ was a glamorous concept.

Joan Kron, a journalist and filmmaker born in 1928, recalls sitting on the floor as a little girl, cutting out pictures of ever more streamlined cars from newspaper ads. ‘I was fascinated with car design, these modern cars’, she says. ‘Industrial design was very much on our minds. It wasn’t just to look at. It was bringing us the future.’

Young Joan lived a short train ride from the famous 1939 New York World’s Fair, whose theme was The World of Tomorrow. She went again and again, never missing the Futurama exhibit. There, visitors zoomed across the imagined landscape of America in 1960, with smoothly flowing divided highways, skyscraper cities, high-tech farms, and charming suburbs. ‘This 1960 drama of highway and transportation progress’, the announcer proclaimed, ‘is but a symbol of future progress in every activity made possible by constant striving toward new and better horizons.’

‘All I wanted to do,’ Kron says, ‘was go into the World of Tomorrow.’ She wasn’t alone. Anticipating a bright future was a defining characteristic of the era, especially in the United States.

When Disneyland opened in 1955, Tomorrowland embodied the promise of progress. A plaque at the entrance announced ‘a vista into a world of wondrous ideas, signifying man’s achievements . . . a step into the future, with predictions of constructive things to come.’

Back then, the Year 2000 and the twenty-first century were glamorous destinations. Newspaper features and TV documentaries described a future filled with barely imaginable wonders. A 1966 New York Times article titled ‘A Glimpse of the 21st Century’ predicted ‘a world virtually free of deserts, smog and engine noise, comfortably supporting a population ten times that of today – from 25 to 50 billion’, thanks to abundant nuclear power.1 There would be resorts on the bottom of the ocean and underground conveyor belts transporting bulk cargo. Underground electric trains would whisk passengers from city to city while electric air buses carried them across town. These were not idle speculations, the Times assured readers, but serious scientists’ efforts ‘to extend present trends a few decades into the future’.

As a child, I felt lucky to be born in 1960. I’d be only 40 in the year 2000 and might live half my life in the magical new century. By the time I was a teenager, however, the spell had broken. The once-enticing future morphed into a place of pollution, overcrowding, and ugliness. Limits replaced expansiveness. Glamour became horror. Progress seemed like a lie.

Much has been written about how and why culture and policy repudiated the visions of material progress that animated the first half of the twentieth century, including a special issue of this magazine inspired by J Storrs Hall’s book Where Is My Flying Car? The subtitle of James Pethokoukis’s recent book The Conservative Futurist is ‘How to create the sci-fi world we were promised’. Like Peter Thiel’s famous complaint that ‘we wanted flying cars, instead we got 140 characters’, the phrase captures a sense of betrayal. Today’s techno-optimism is infused with nostalgia for the retro future.

But the most common explanations for the anti-Promethean backlash fall short.2 It’s true but incomplete to blame the environmental consciousness that spread in the late sixties. Rising living standards undoubtedly led people to value a pristine environment more highly. But environmental concerns didn’t have to take an anti-Promethean turn. They might have led instead to the expansion of nuclear power or the building of solar energy satellites. Cleaning up smoggy skies and polluted rivers could have been a techno-optimist enterprise. It certainly didn’t require curtailing space exploration. Eco-pessimism itself needs a fuller explanation.

Nor can popular entertainment shoulder the blame. Outside of niche books and magazines, the golden age of optimistic science fiction did not exist. Postwar sci-fi movies were dominated by plots about the monstrous effects of radiation and other technologies gone awry. Susan Sontag even wrote a highbrow essay about them in 1965, ‘The Imagination of Disaster’. Through these films, she wrote, ‘one can participate in the fantasy of living through one’s own death and more, the death of cities, the destruction of humanity itself’. Latecomer 2001: A Space Odyssey (1968) and short-lived Star Trek (1966–69) were the exceptions, Godzilla (1954), Them! (1954), and Forbidden Planet (1956) the rule. Cautionary works of science fiction terror coexisted with mid-century progress glamour.

The movie that best demonstrates how the old future lost its allure isn’t science fiction. Its plot has nothing to do with technology. It isn’t scary or dystopian. It’s funny and knowing and the top-grossing movie of 1967. The Graduate racked up seven Oscar nominations and made Dustin Hoffman a star. A famous scene punctures progress glamour by making it look stodgy and ridiculous.

A friend of his parents takes Ben (Hoffman) aside at his college graduation party, intent on telling him something very important, something so momentous they must leave the room to find a quiet place out by the pool:

‘I just want to say one word to you, just one word.’

‘Yes, sir.’

‘Are you listening?’

‘Yes I am.’

‘Plastics.’

‘Exactly how do you mean?’

‘There’s a great future in plastics. Think about it. Will you think about it?’

‘Yes, I will.’

‘Shhh. Nuff said. That’s a deal.’

With their modern home and promising son, Ben’s parents are living the California dream, experiencing a future more abundant than even the World’s Fair Futurama promised. But neither Ben nor the movie’s audience finds that future enticing. It’s just life, and a meaningless, superficial one at that. More of the same sounds awful. There’s a great future in plastics. No thank you.

To someone like Mr. Maguire, recalling the yearnings of his own Depression-era youth, a career in plastics might represent security and recognition: an alluring future of material success. To Ben, it’s banal, the antithesis of glamour.

The word glamour originally meant a literal magic spell that made people see things that weren’t really there. In its contemporary sense, glamour still contains an illusion. It obscures difficulties, flaws, and costs. It hides boredom and pain. It seems effortless. The beach holiday entails no delayed flights, the electric car charges instantly, the expansive windows require neither cleaning nor curtains, the high heels never pinch. Glamour imagines a world without imperfections or trade-offs.

That artificial grace gives progress glamour its utopian quality. ‘The theme of abandoning a repellent present reality and escaping into a distant ideal is one that has shaped all visions of future communities’, write Joseph J Corn and Brian Horrigan in Yesterday’s Tomorrows: Past Visions of the American Future, published in 1996 and still the best work on the subject.

Consider the most powerfully glamorous images from the high period of progress glamour, extending from the 1920s to the mid-1960s. They fall into several distinct categories: 1) effortless rapid transportation, which starts with automobiles and diesel trains and continues to jets and spaceships; 2) cities that appear orderly and clean; 3) luxurious domestic contentment without drudgery or want. All these images offer alternatives to a world in which ordinary people were isolated on farms or crowded into rapidly growing cities, where serious material privation was still a recent memory if not a daily reality, and where work, whether household or paid labor, was physically grueling and often mind-numbingly dull.

Glamour invites projection by giving the audience just enough information to engage the imagination, allowing scope for the viewer’s own fantasies. Mystery and distance are essential. Too much knowledge breaks the spell. Your dream job may be rewarding once you have it, even fun at times, but it’s no longer glamorous. You know too much about the tedious, frustrating, or annoying details.

To understand the disillusionment with progress that took hold a half century ago, we have to understand why progress seemed glamorous in the first place and what its grace obscured. What exactly was its allure and to whom? What was the nature of its illusion? Only then can we consider how progress might recover its glamour and what the risks might be if it does.

The glamour of progress in the twentieth century emerged from audiences inspired by three different kinds of longing. At a given moment, an individual might partake in any one of the three, but they also formed distinct audience clusters whose different underlying desires matter to our story.

Together these groups constituted a large enough public to make imagining a marvelous future the cultural norm. Eventually, their diverging aspirations produced real-world consequences, dispelling the futuristic glamour of progress.

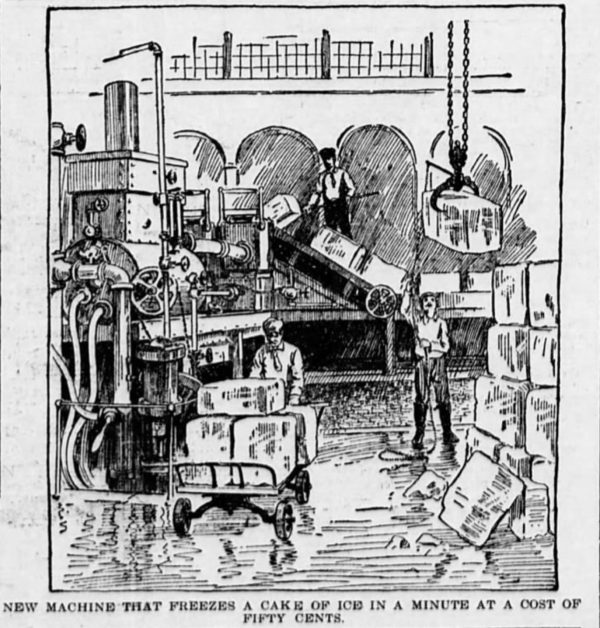

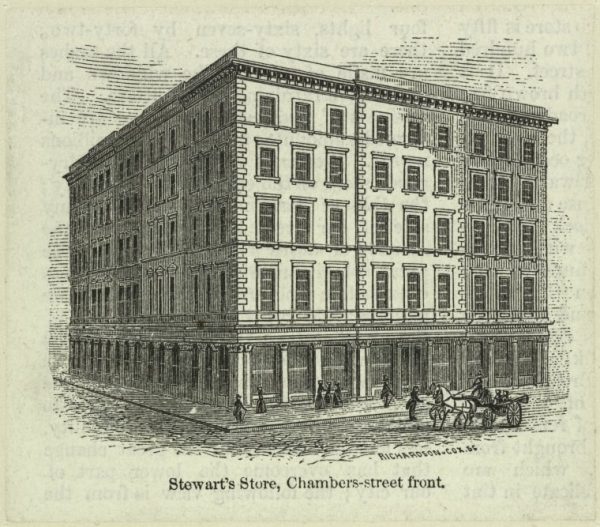

One audience longed for order, cleanliness, efficiency, and speed: an escape from the dirt, disorder, and constraining clutter of the past. ‘Speed is the cry of our era’, wrote industrial designer Norman Bel Geddes in 1932. Ranging from Bauhaus purists to Hollywood set designers, these were the modernists, the most politically and culturally influential of the three clusters. They sought to create plenty by eliminating waste, a category that included everything from ornate furniture and fussy wallpaper to the price system and ‘duplicative’ economic competition. Their desires expressed themselves in buildings of steel, concrete, and glass; in streamlined artifacts and open-plan interiors; in slum clearances and superhighways; and in schemes for economic planning and control. The Futurama, designed by Bel Geddes, embodied a popular version. Le Corbusier’s Radiant City of concrete housing towers sharing green space was a highbrow incarnation.

A second audience craved discovery, adventure, and meaningful achievement. Like Tennyson’s Ulysses, they longed ‘To follow knowledge like a sinking star, / Beyond the utmost bound of human thought’. Dissatisfied with mass culture, they yearned for excellence. They saw in astronauts and scientists the glamour formerly reserved for heroic explorers, courageous sea captains, and western gunfighters.3 This form of futuristic glamour operated most powerfully on a relatively small portion of the population – those who, in an era that celebrated the affable average man, were out of step and excessively brainy.4 This was, in short, glamour for nerds (including little Virginia reading You Will Go to the Moon every day in kindergarten).

It was the third, far larger, audience that made progress glamour a cultural norm. These were the vast numbers of ordinary people who longed for security, comfort, and ease, a respite from struggle, drudgery, and want. Once they might have dreamed of the sudden bounty of old novels: inheriting an income from an aristocratic secret father or discovering a long-lost millionaire uncle.

In the early twentieth century, however, new forms of luxury injected a note of realism into glamorous visions of the good life. The country estates, servants, and jewels of the wealthy might still be out of reach, but new technologies got cheaper over time. These luxuries didn’t depend on scarcity for their value. Radios and record players brought music into homes without pianos. Movies offered cheap theater tickets. The Model T and its successors gave ordinary people the swift, private transportation once reserved for the coach-owning elite.

The cinema provided an everyday vision of this futuristic glamour. ‘The women were always dressed in furs and fancy hats and lived in lovely homes and got refrigerators! I mean we hadn’t got a refrigerator!’ recalled a British woman interviewed in the 1990s about her memories of seeing movies in the 1930s. ‘When we went to the cinema and [saw] people switching lights on and opening fridges and hoovering, it was a different world. I think it made us all a bit more ambitious.’ For young working-class women, electric lights, refrigerators, and vacuum cleaners were glamorous. ‘I’ll live like a princess in a house that runs like magic!’ exulted a woman envisioning her ‘post-war dream’ in a magazine ad from the American Gas Association. Progress meant more than rocket ships and skyscrapers. It brought appliances and indoor plumbing. Progress meant an end to everyday drudgery.

Kron, whose New York family lived a few decades ahead of their British counterparts, recalls how a ‘cute refrigerator with round edges’ replaced the family’s ‘klutzy’ icebox and a single telephone on the kitchen wall was joined by additional extensions. ‘These things were glamorous’, she says, ‘not just because of the way they looked but because of what they brought to us. They brought us a better, more interesting life.’

The progress glamour of the postwar period was heightened by recent lived experience. Ordinary people were enjoying material abundance – clean new suburban homes, new clothes made from easy-care fabrics, new televisions and automobiles and kitchen gadgets. ‘Push-button controls’ weren’t just a way of switching things on. They were a metaphor for a new life of ease. If twentieth-century life was this good, surely twenty-first-century life would be fantastic. At least that’s how the prewar generation thought.

When the glamorous future becomes the real-life present, however, it loses its mystery and reveals its flaws, especially to those who have never known any other way of life. Sure, running a vacuum cleaner is easier than beating a rug or scrubbing a floor, but cleaning still feels like a never-ending chore. No house runs on magic. Over time, experience dispelled the glamour of progress. A new generation wasn’t longing for more of the same.

Experience also challenged the prevailing concept of progress by revealing its drawbacks. Take food, which twentieth-century advances made abundant and convenient. In 1954, Philip Wylie, a famous curmudgeon, published an Atlantic essay titled ‘Science Has Spoiled My Supper’. Wylie lamented the results of scientific farming and industrial food production. ‘What they’ve done is to develop “improved” strains of things for every purpose but eating’, he wrote. ‘They work out, say, peas that will ripen all at once. The farmer can then harvest his peas and thresh them and be done with them. It is extremely profitable because it is efficient. What matter if such peas taste like boiled paper wads?’

By the 1970s, the generation raised on convenience foods would include rebels like restaurateur Alice Waters (born in 1944) who changed the way Americans ate. Food tastes much better nowadays, but it lacks the Space Age glamour of TV dinners, freeze-dried coffee, and Tang. The ideology of ‘slow food’ celebrates fresh local ingredients and decries large-scale agriculture. It positions itself as a critique of twentieth-century ‘progress’.

But the abundant out-of-season produce today’s shoppers expect to find in their supermarkets owes much to free trade policies and logistical innovations like shipping containers. Our better food comes from distinctly modern systems. For all their counterculture roots, French press coffee, balsamic vinegar, and everyday avocados represent genuine progress.

Here we see the conflict between two different concepts of progress. In one, progress is an open-ended process of decentralized trial-and-error learning, where each new advance begets criticism and further improvements.5 Wylie’s mass-produced peas represented genuine advances, enabling far more people to eat vegetables year-round. But their taste left much to be desired. The food industry still had work to do.

This idea of progress acknowledges that as soon as we have something, however well it meets our original desires, we see its flaws. ‘Form follows failure’, in the words of civil engineering professor Henry Petroski. Dissatisfaction drives progress. ‘Since nothing is perfect’, writes Petroski, ‘and, indeed, since even our ideas of perfection are not static, everything is subject to change over time. There can be no such thing as a “perfected” artifact; the future perfect can only be a tense, not a thing.’ In this concept of progress, glamour may inspire advancements, but it doesn’t survive their realization. New longings, derived from new forms of discontent, may then yield new glamorous concepts of the future, fueling further improvements.

That’s not how the modernists saw progress. In liberal democracies and totalitarian regimes, on the left, right, and center, among government planners and corporate efficiency experts, modernists imagined progress as aiming toward the one best way. Although they might disagree with each other, they were confident that they knew in advance what the future should look like. The modernist yearning for efficiency, order, and speed provided the leading ideology of progress in the twentieth century and much of its enduring visual vocabulary.6 When today’s critics equate techno-optimism with techno-fascism, they are channeling this association: Progress means smart people sweeping away the past and deciding how everyone will live.

The modernist future ideally started from scratch – the better to create order – and moved inexorably toward a perfected state. It eliminated the waste of variety and the messiness of individual desires. Like visitors to the Futurama, modernists imagined progress from a god’s-eye perspective. In his semi-autobiographical novel World’s Fair, EL Doctorow describes the exhibit as ‘a toy that any child in the world would want to own. You could play with it forever. The little cars made me think of my toy cars when I was small . . . The buildings were models, it was a model world.’ But life takes place on the ground, where details and personal preferences matter. Futurama’s enthusiastic crowds never considered what it might feel like to be the planners’ toy.

In the 1950s and 1960s, cities began bulldozing working-class neighborhoods to make way for the highways, civic complexes, and public housing towers that looked so appealing in modernist depictions. ‘It’s what they call Progress, and put that with a capital P. You can’t stand in the way of Progress,’ said a Memphis, Tennessee, barber in 1963, repeating a common litany of the era. The city had slated his shop, the oldest in town, for demolition. ‘Urban renewal, you know’, he said. ‘They’re tearing down everything to build something better.’ What they built was one of those cement-heavy civic plazas that look sleek in architectural models and are grim in person. By the late 1960s, many people had had enough of that kind of Progress with a capital P.

The unwieldy collection of movements known as the counterculture was the most obvious sign of resistance. It was a product of mass affluence, comprising the children who’d grown up in the real-world version of the Futurama and disdained a future of plastics. They took abundance for granted. Exalting nature and rejecting artifice, the counterculture’s attitudes harked back to Rousseau and the anti-industrial Romantics but with a distinctive twentieth-century twist.

Theodore Roszak, who coined the term counterculture in his 1969 book The Making of a Counter Culture, later defined its unifying characteristic as ‘the rebellion against certain essential elements of industrial society: the priesthood of technical expertise, the world view of mainstream science and the social dominance of the corporate community’. The counterculture took many forms, from hippie communes to New Left demands for ‘participatory democracy’. It included anti-war protests and calls for women’s liberation, along with plenty of sex, drugs, and rock and roll. Politically and culturally, the counterculture repudiated the reigning notions of progress. It was anti-modernist.

‘In the technocracy, nothing is any longer small or simple or readily apparent to the non-technical man’, wrote Roszak. ‘Instead, the scale and intricacy of all human activities – political, economic, cultural – transcends the competence of the amateurish citizen and inexorably demands the attention of specially trained experts’. Technocrats might consider themselves benevolent forces, extending prosperity to the masses, he argued, but they exercised ‘totalitarian control’.

The counterculture rejected claims to expertise, whether by city planners, industrial corporations, research scientists, or mainstream medicine. It longed for a society anyone could understand. It decried specialization. Even the counterculture’s technophilic version, represented by Stewart Brand and The Whole Earth Catalog, sought individual ‘access to tools’. The personal computer, not the corporate mainframe, embodied its idea of progress.

The counterculture’s antagonism to big business and scientific expertise largely explains why ecological consciousness took an anti-Promethean turn. Solving discrete environmental problems means further empowering people with specialized knowledge. Embracing spiritual practices, celebrating the wilderness, demanding vegetables raised without pesticides, and protesting nuclear power are things anyone can do. The anti-Promethean turn encouraged people to trust their instincts: to protect what they treasured and ban what they feared, regardless of what experts said.

Meanwhile, the writings of Jane Jacobs informed a new intellectual appreciation for the qualities that evolved without top-down planning in urban neighborhoods. Jacobs emphasized the evolutionary nature of cities and the vitality of urban neighborhoods if allowed to grow and change with social and economic circumstances. Where modernists saw messiness, she saw the subtle orders of everyday interactions. ‘Reformers have long observed city people loitering on busy corners, hanging around in candy stores, and bars and drinking soda pop on stoops, and have passed a judgment, the gist of which is: “This is deplorable! If these people had decent homes and a more private or bosky outdoor place, they wouldn’t be on the street!”’ she wrote. ‘This judgement represents a profound misunderstanding of cities.’ Bustling sidewalks, shops opening onto the street, and people hanging out on front steps provide a sense of safety, she observed, through ‘eyes on the street’.

Embraced (and derided) by people on both left and right, her view of cities exemplified what classical-liberal economist FA Hayek called ‘spontaneous orders’. Here, order emerges not from top-down plans but from gradual adaptations that incorporate decentralized, often unarticulated, knowledge of desires, possibilities, and trade-offs. People demonstrate their love of bustling sidewalks by populating them. Building uses change with economic and cultural circumstances. Industrial lofts become artists’ apartments; churches become coworking spaces; storefronts become churches; banks become apartment buildings. Prices rise and fall, reflecting changes in what people actually want rather than what they’re allowed to have.

Jacobs was particularly critical of the glamour of ‘garden cities’ where large towers surrounded by green space would replace the hustle and bustle of urban sidewalks. In a 1958 article titled ‘Downtown Is For People’, she wrote that such projects

will be spacious, parklike, and uncrowded. They will feature long green vistas. They will be stable and symmetrical and orderly. They will be clean, impressive, and monumental. They will have all the attributes of a well-kept dignified cemetery…

We are becoming too solemn about downtown. The architects, planners – and businessmen – are seized with dreams of order, and they have become fascinated with scale models and bird’s-eye views. This is a vicarious way to deal with reality, and it is, unhappily, symptomatic of a design philosophy now dominant: buildings come first, for the goal is to remake the city to fit an abstract concept of what, logically, it should be.

Instead of thinking about buildings, Jacobs proposed taking a careful look at ‘what people like’ about existing cities and seeking to strengthen those characteristics. ‘Let the citizens decide what end results they want, and they can adapt the rebuilding machinery to suit them’, she wrote. ‘If new laws are needed, they can agitate to get them.’

As the grassroots backlash against urban renewal schemes grew, however, it inspired laws that choked off the very dynamic processes Jacobs had celebrated. Citizens, it turned out, often simply wanted to preserve the status quo. Historic preservation laws limited demolishing or in some cases even renovating old buildings. Where once city governments razed whole neighborhoods, now procedures for citizen comment shifted the default toward blocking even small private projects. The shift gave small groups veto power, overriding both democratic representation and market processes.

Using planning hearings and lawsuits, homeowners turned participatory democracy into a powerful defense against change. Sometimes they blocked projects altogether. Sometimes they simply delayed them until they became financially unviable. Many of today’s obstacles to building new housing and environmentally friendly infrastructure originated in the backlash against the hidden costs of modernist progress glamour.

Since the 1980s, technological progress has enjoyed a few flickers of glamour, notably around the singular figure of Steve Jobs, who brought computing power into the everyday lives – and eventually the pockets – of ordinary people. Jobs fused countercultural allegiances with modernist design instincts, technological boldness, and capitalist success. Most important, he gave people products that they loved.

The outpouring of public grief at his death in 2011 demonstrated his power as a symbol. As Meghan O’Rourke wrote in The New Yorker, ‘We’re mourning the visionary whose story we admire: the teen-age explorer, the spiritual seeker, the barefoot jeans-wearer, the man who said, “Remembering that you are going to die is the best way I know to avoid the trap of thinking you have something to lose.”’ Jobs embodied a new ideal of progress, at once uncompromising and humanistic, a vision of advancing technology that artists could embrace. (That the hippie capitalist could be a tyrannical boss and neglectful father were details obscured by his glamour.)

Jobs also helped to deliver on one of the touchstone technologies of twentieth-century progress glamour, a technology almost as evocative as flying cars. The twenty-first century kept the promise of videophones, and they turned out to be far better than we imagined. Instead of the dedicated consoles of The Jetsons, Star Trek, and the 1964 World’s Fair, we got multifunctional pocket-sized supercomputers that include videophone service at no additional cost. ‘I like the twenty-first century’, I tell my husband on FaceTime. But, like refrigerators, videophones aren’t glamorous when everybody has one. They’re just life. We complain about their flaws and take their benefits for granted.

Today’s nostalgic techno-optimists want more: more exciting new technologies, more abundance, and more public enthusiasm about both. Mingling the desires of the old modernists for newness, rational planning, and speed with those of the old nerds for adventure and discovery, they long for action. Their motto is Faster, please, a phrase popularized by Instapundit blogger Glenn Reynolds and the title of James Pethokoukis’s Substack newsletter.

These are niche enthusiasms, however, just as they were in the twentieth century, and techno-optimists won’t enlist the general public as long as they look backward for cultural inspiration. If popular enthusiasm could be easily stoked by blockbuster movies featuring heroes with cool gizmos, Iron Man and Black Panther would have done the trick.7 Techno-optimism wouldn’t seem so countercultural.

Twentieth-century progress glamour answered twentieth-century longings. The counterculture attack on it responded to alternative desires. Contemporary longings are different from both, deriving from internet culture and heightened competition. Any hope to restore glamour to progress must start by understanding what today’s audiences yearn for and what they want to escape.

Speaking for many, University of Southern California student Melody Guo wrote in 2023 of her generation’s desire for ‘liberation from the attention economy, from the atomization of society caused by excessive individualism and the loss of the real for the fake’. They yearn, she says, to escape the demand to constantly publicize one’s existence via social media. No longer simply a conformist drive to keep up with the Joneses, today’s rat race is a desperate attempt to attract their attention. Guo imagines a new sort of counterculture, emphasizing in-person relationships and functional, trustworthy institutions.

Instead of liberation from want and drudgery or from conformity and repression, many today long for escape from the always-on attention economy, the perceived superficiality of virtual relationships, and the relentless, zero-sum striving for credentials and achievement. They hunger for efficacy, tranquility, and intimate connection. They want to feel accomplished, confident, and valued, without the demand to be extraordinary. Rather than imagining themselves as billionaire entrepreneurs, sports superstars, or multi-platform influencers, many crave the chance to be content – even proud – as Ordinary Barbie or Just Ken. The authentic and average have become paradoxically glamorous.

The yearning to feel capable and in control shows up all over contemporary culture. We see it in the growth of cooking classes and of mixed martial arts, of weight lifting and craft hobbies, even blacksmithing. ‘It’s all things that make us use our hands, make us use tools, make us master something that seems beyond us at first’, Elizabeth Kronfield, director of the School for American Crafts at the Rochester Institute of Technology, told The New York Times. Here mastery is a goal in itself, rather than an economic imperative or another form of competition. Community arises from sharing tips and tools, time and space.8

Despite their variety, all these pursuits not only reflect common underlying desires but also sacrifice qualities once assumed to be universal goods. They are inconvenient, time-consuming, and often physically uncomfortable. While enthusiasts may not reject convenience, speed, or comfort elsewhere in their lives, they’re willing to give them up in pursuit of other satisfactions.

The variety of these pursuits also highlights a significant social shift. We no longer live in an economy where mass production dictates lowest common denominator peas. Genuine progress, and any new embodiments of progress glamour, must avoid the fatal fallacy of twentieth-century modernism. There is no one best way. People have different gifts, different tastes, different values, different histories. Even if they share the same abstract longings, they will channel them in different ways. Mixed martial arts and cooking classes both offer efficacy and community but they appeal to different personalities.

Making the future alluring requires more than reconstituting twentieth-century visions with more greenery. It means understanding an ‘abundance agenda’ as a way to provide a common substrate for many different versions of the good life: not the future but many futures.

Take the housing theory of everything. Delivering on the promise of affordable modern homes was one of the great achievements of the mid-twentieth century, and one of the reasons that progress felt real to its denizens. Today the terrible burden of housing costs crushes the optimism of the young. It stokes feelings of economic desperation even among the well paid.

Making family-sized dwellings abundant and thus affordable for most people would be the single most effective step toward restoring faith in progress. People in every time and place cherish the value of home – of a place to call their own and in which to make a life. Although a dwelling alone cannot supply the full meaning of home, it provides the necessary personal space.

The caveat is that not everyone wants to live in a dense city neighborhood. Housing abundance does not necessarily mean density. Turning the insights of Jane Jacobs into a new version of the one best way replicates in new forms the errors her work corrected. A truly abundant future makes room for homes in cities and suburbs, small towns and farms and wide open spaces. It incorporates diverse living arrangements: families large and small, nuclear and extended; clusters of households sharing common interests and facilities; integration of home, work, shopping, and services; whatever people desire, imagine, and build. It is pluralist and flexible. Rather than dictate artificial scarcity, it respects what prices tell us about supply and demand. Abundant housing provides the background that allows many different versions of the pursuit of happiness.

A more resilient idea of progress also makes room for heritage and history. It doesn’t demand a blank slate. The idea of starting from scratch may be glamorous, but it’s neither realistic nor humane. ‘The dream of finding a scratch line, to serve as a starting point for any “rational” philosophy is unfulfillable’, philosopher of science Stephen Toulmin wisely observed. ‘There is no scratch.’ Our thought is a product of inherited ideas, however we may revise them, and our environments bear the marks of our predecessors as well. Most important, few human beings want to live cut off from the legacies of the past – art and architecture, customs and family lore. If your glamorous ideas of the future include no traces of history, you’re doing it wrong.

In his 1995 novel The Diamond Age, Neal Stephenson explores how extreme material abundance might coexist with – or even create – pluralist communities. My candidate for the best science fiction novel addressing current and near-future social dilemmas, The Diamond Age portrays a world in which nanotech matter compilers, or MCs, can make just about anything on demand. The transformation wrought by nanotech has split global society into self-regulating tribes, or phyles. An unaffiliated underclass (thetes) live chaotic lives. Matter compilers provide their basic material needs but they lack the social structure and protection of a phyle.

In one section the child protagonist Nell and her brother Harv, who have lived only in the world of thetes, go looking for one of their mother’s former boyfriends. He has joined a phyle called Dovetail, whose identity they find hard to comprehend:

‘We make things,’ the woman said, as if this provided a nearly perfect and sufficient explanation of the phyle called Dovetail . . . ‘My name’s Rita, and I make paper.’

‘You mean, in the M.C.?’

This seemed like an obvious question to Nell, but Rita was surprised to hear it and eventually laughed it off. ‘I’ll show you later. But what I was getting at is that, unlike where you’ve been living, everything here at Dovetail was made by hand. We have a few matter compilers here. But if we want a chair, say, one of our craftsmen will put it together out of wood, just like in ancient times.’

‘Why don’t you just compile it?’ Harv said. ‘The M.C. can make wood.’

‘It can make fake wood,’ Rita said, ‘but some people don’t like fake things.’

Voluntarily forgoing the convenience of ubiquitous matter compilers for the satisfactions of traditional craft is a response to abundance. The people of Dovetail aren’t materially deprived, nor do they completely eschew MCs. They have chosen a life where they find beauty and meaning in the work of their hands. In a world where the handmade is special, that work also has a market. Dovetail offers a glamorous possible future, at least to some.

Yearning for such background abundance underlies much of the universal basic income’s allure today. It’s a policy wonk’s version of the matter compiler, a glamorous concept of ubiquitous plenty masquerading as an anti-poverty program. The UBI offers a tantalizing vision of security: enough income to support doing whatever gives you satisfaction, regardless of economic conditions. Stephenson both highlights and punctures that glamour. Only in disciplined tribes, he suggests, does the MC support a satisfying life. Thetes enjoy material security without structure, attachments, or work – without, in other words, the activities and relationships that give life meaning.

How exactly today’s longings might manifest themselves, whether in glamorous imagery or real-life social evolution, is hard to predict. But one thing is clear: For progress to be appealing, it must offer room for diverse pursuits and identities, permitting communities with different commitments and values to enjoy a landscape of pluralism without devolving into mutually hostile tribes. The ideal of the one best way passed long ago. It was glamorous in its day but glamour is an illusion.

1 The current world population is just over eight billion.

2 Because it captures the change in cultural attitudes, I prefer Brink Lindsey’s ‘anti-Promethean backlash’ to terms such as the Great Downshift or Great Stagnation, which emphasize the slowdown in economic growth that began in 1973.

3 Evoking a long-running TV western, Gene Roddenberry famously pitched Star Trek as ‘Wagon Train to the stars’.

4 In August 1957, Margaret Mead and Rhoda Métraux published the results of a survey of American high school students in Science. When asked what they thought of scientists, students conceded their importance. ‘Without science we would still be living in caves’, was a common sentiment. But they strongly rejected the idea of becoming scientists themselves or of marrying one. Scientists were just too weird. The authors wrote, ‘The number of ways in which the image of the scientist contains extremes which appear to be contradictory – too much contact with money or too little; being bald or bearded; confined to work indoors, or traveling far away; talking all the time in a boring way, or never talking at all – all represent deviations from the accepted way of life, from being a normal friendly human being, who lives like other people and gets along with other people.’

5 This is the theme of my 1998 book The Future and Its Enemies.

6 For a good discussion of the visual imagery of fascist and communist regimes, see Steven Heller’s Iron Fists: Branding the 20th-Century Totalitarian State.

7 The glamour of Afrofuturism, exemplified by Black Panther, deserves a discussion of its own.

8 Video games provide an incongruous combination of simulated achievements with genuine competence and the deep psychological pleasures known as flow. Is gaming a form of efficacy or a substitute for it? Is gamer community real?
